Recently I got to participate in the MLH Fellowship Program, a 12-Week Internship Alternative provided by Major League Hacking. MLH is a Global Community that aims to empower Hackers around the world with Hackathons and Community Events. MLH Fellowship Applications open three times a year for three programs: Explorer, Open-Source and Externship.

I applied to be a "Fellow" in Batch 0 of the Program, but I was rejected because my application was late. However, that did not deter me from applying again for the Fall '20 Batch, which introduced me to the "Explorer" Program, and I decided that it was better suited for me. After a tough Application Process and a chilled out Interview Process, I was selected as an "Explorer Fellow" for Pod 1.1.3, later named "Sneaky Skylarks".

How does the Program work?

Fellows who are selected for the Fellowship Program are placed in a Pod under a Pod Leader, along with a Professional Mentor. In the Explorer Pod, the Fellows are placed in Teams of 3 or 4 members and they work on an interesting Hack surrounding a theme for the Sprint. My Pod is named Sneaky Skylarks and it is led by Karan Sheth, who is our prime motivation in this Program and the one person helping everyone in building their Hacks and their Technical Skills.

Fellows from Sneaky Skylarks

Every Week we have two Sessions, including a Weekly "Show and Tell" where the Fellows showcase their Skills in interesting subjects that don't necessarily concern Technical Skills. We complete one sprint every two weeks, and every sprint is built around a Hackathon theme supported by Industry Talks from Professionals.

This Article covers how we built a complete Flutter Application named "Helping Hands" in our first Sprint around "Open Innovation" and nailed the Orientation Hackathon by winning the First Prize.

Building Helping Hands

I was teamed up with Yash Khare and Shambhavi Aggarwal as part of Team 4 for the Orientation Hackathon. Since the Hackathon was Open-Ended, we decided that we needed to have an Innovative Product at the end.

We started collecting pointers on a variety of applications that we could build and possibly complete by the end of the Sprint. Building on a complex idea and adding in more complex features would surely have taken us a lot of time, and we were looking for a more chilled out development workflow for the first Sprint.

After a lot of Brain-Storming, we finally decided to build an application that would help Visually Impaired People to better navigate and see around using Artificial Intelligence Models. With this Application, we aimed to develop a one-stop solution that allows Blind or Partially Blind People to better understand their surroundings and to cope with the dynamic world ahead of them.

Stack Attack

After a lot of discussions, we finalized a Technology Stack built around Flutter for Mobile Application Development and Flask for developing RESTful Application Programming Interfaces (APIs). We decided to integrate Tensorflow and Keras Models into our Application that can even run Offline, in case the User does not have access to an Internet Connection.

Later in the Project, we also made use of Captionbot and Teachable Machine to prototype the AI Models really quickly and integrate them with the existing Application. With the initial vision in place, we were pretty confident about collaborating across various domains like Application Prototyping with Flutter, RESTful API Development, Data Modelling using Keras and finally, UI/UX Design, which was the prime Unique Selling Point (USP) of the Project.

Features

For the Minimum Viable Product (MVP) of the Application, we finalized Five Features that would make the Application more accessible to Visually Impaired People. This would also leave room for future work on the Project and for valuable Open-Source Contributions to be made to it.

Live Image Labelling

Live Image Labelling is one of the features that capitalizes on Tensorflow, Google's Machine Learning Framework that has been designed to develop and serve models even on Low-End Devices like Smartphones. To implement this feature, we made use of Transfer Learning so that we don't have to train a Custom Model from Scratch.

We used the SSD Mobilenet Model from Keras and froze its pre-trained layers by setting them as not Trainable, so that their weights are not updated during our training. After successfully training the Model, we converted it to a Tflite Model that can be served using a Tflite Plugin.

The Tflite Plugin was added as a Dev Dependency to integrate our neural network with the app. We used its loadModel() function to load the Model and then its runModelOnFrame() function to take the Camera Stream Input and generate inferences from it.

Using Google's Text to Speech Module available as a Flutter Dependency, the User can hear the generated inferences automatically without needing to read the Text. This made the Application handier and more accessible. The Feature can run completely offline without needing any Internet Connection.

Live Image Captioning

Live Image Captioning was implemented using the Flask Micro Web-Framework and the Captionbot Module provided by Microsoft Cognitive Services. We initially decided to develop and deploy a custom Image Caption Generator using the Flickr8K Dataset, which contains more than 8000 Images for Data Modelling along with Text Descriptions of the Images.

After initial Data Pre-Processing, we made use of the Bilingual Evaluation Understudy (BLEU) score to better evaluate the performance of our model, which was built on top of a pre-trained VGG-16 Model. Since VGG-16 is developed to classify images into 1,000 different classes, we removed the last layer of the network so that it only extracts features from the Image.
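For readers unfamiliar with BLEU, here is a minimal sketch of how a sentence-level score can be computed. This is not the evaluation code from our experiments; it is a plain-Python illustration that assumes uniform n-gram weights, no smoothing, and pre-tokenized captions. The function names `ngrams` and `bleu` are made up for this example.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count every n-gram (as a tuple) in a list of tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def bleu(candidate, references, max_n=4):
    """Sentence-level BLEU with uniform weights and no smoothing (illustrative only)."""
    log_precisions = []
    for n in range(1, max_n + 1):
        cand_counts = ngrams(candidate, n)
        total = sum(cand_counts.values())
        if total == 0:
            return 0.0
        # Clip each candidate n-gram count by its highest count in any reference.
        max_ref = Counter()
        for ref in references:
            for gram, count in ngrams(ref, n).items():
                max_ref[gram] = max(max_ref[gram], count)
        clipped = sum(min(count, max_ref[gram]) for gram, count in cand_counts.items())
        if clipped == 0:
            return 0.0
        log_precisions.append(math.log(clipped / total))

    # Brevity penalty, using the reference length closest to the candidate length.
    c = len(candidate)
    r = min((len(ref) for ref in references), key=lambda length: (abs(length - c), length))
    brevity_penalty = 1.0 if c > r else math.exp(1 - r / c)
    return brevity_penalty * math.exp(sum(log_precisions) / max_n)
```

A generated caption that matches a reference word for word scores 1.0, while a caption sharing no words with any reference scores 0.0. Real evaluations usually average over a whole test set and apply smoothing, which this sketch leaves out.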

After a few rounds of training and testing, we got an appreciable accuracy, even though our Model was overfitting in the initial stages due to the small size of the Dataset. We quickly realized that making it work on the Cloud would be another pain, since we didn't have resources, except Heroku, to deploy it with multiple dependencies.

In the end, we decided to keep the Model in our Experimental Backend Approach and went forward with CaptionBot, which was easy to use. The Flask API was deployed on Glitch with an Endpoint that passes the Image URL received from the Client-Side to the Captionbot and returns the Inference as a JSON Message. At the Client Side, we made use of the Imgur API to upload the captured Images to Imgur, and the resulting Image URL is passed to the Backend Interface.
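The request flow of that Endpoint can be sketched roughly as below. This is not our deployed code: the JSON field name `image_url`, the function `handle_caption_request` and the `captioner` callable (a stand-in for the call to Captionbot) are all assumptions for illustration, and the sketch is kept framework-free so it runs with the standard library alone.

```python
import json
from urllib.parse import urlparse


def handle_caption_request(body, captioner):
    """Validate a JSON request body and return (status_code, json_text).

    `captioner` is any callable that takes an image URL and returns a caption
    string; here it stands in for the captioning service (hypothetical).
    """
    try:
        payload = json.loads(body)
    except (TypeError, ValueError):
        return 400, json.dumps({"error": "body must be valid JSON"})

    image_url = payload.get("image_url") if isinstance(payload, dict) else None
    if not isinstance(image_url, str):
        return 400, json.dumps({"error": "image_url is required"})

    # Only accept absolute http(s) URLs, such as the ones an image host returns.
    parsed = urlparse(image_url)
    if parsed.scheme not in ("http", "https") or not parsed.netloc:
        return 400, json.dumps({"error": "image_url must be an http(s) URL"})

    caption = captioner(image_url)
    return 200, json.dumps({"image_url": image_url, "caption": caption})
```

In a Flask app, a function like this would typically be called from a route that reads the request body and returns the status code and JSON text to the Client.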

Text Extraction via OCR

Users might sometimes need to read text present in a photo they clicked, or have saved in their gallery. Being visually impaired, it might not be easy for them to properly read the text. So we provided a feature for users to click a photo or select an existing one, and extract all the text from it. This text is then spoken out to the user using the Text-to-Speech functionality.

To make it possible, we made use of the Simple OCR Plugin available in Flutter to quickly prototype our feature. The User can open the Text Extraction Screen and tap on it. After a photo is selected, the photo and the text extracted using the OCR functionality are displayed in a dialog with stop, replay, and pause options, and the text is spoken out to the user as well.

Screenshots (left to right): Text Extraction, option to choose from camera or gallery, options for stop, replay and pause, dialog with information.

Currency Detection

The Currency Detection Feature was one of the hallmarks of our Project because we wanted to provide an interface so that the Users don't have to depend on others to identify the value of a currency note. To make this possible, we made use of Teachable Machine to train a Model on an extremely small dataset and later converted it to a Tflite Model. This model can be trained on more data to detect currencies of other countries as well.

The User can just open the currency detection screen, tap on it, and click a photo of the note. Our model can classify low quality or blurred images, as well as folded notes, with high accuracy.

The model will classify the image and show a dialog with the prediction. For better accessibility, the Text-to-Speech functionality will also speak out the prediction, so the user does not need to actually see the screen.
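The step from raw classifier output to the spoken message can be sketched as follows. This is an illustration rather than the app's code (which lives in Flutter): the function name `describe_prediction`, the label strings, the wording of the messages and the `min_confidence` cut-off of 0.6 are all assumptions.

```python
def describe_prediction(labels, scores, min_confidence=0.6):
    """Turn per-class scores into a sentence for the Text-to-Speech step.

    `labels` and `scores` are parallel lists, e.g. the class names and the
    probabilities returned by an image classifier. The threshold is an
    assumed value, not one taken from the project.
    """
    if not labels or len(labels) != len(scores):
        raise ValueError("labels and scores must be non-empty and the same length")

    # Pick the class with the highest score.
    best = max(range(len(scores)), key=lambda i: scores[i])

    # Avoid announcing a denomination when the classifier is unsure.
    if scores[best] < min_confidence:
        return "Could not recognise the note, please try again."
    return f"This looks like a {labels[best]} note."
```

For example, `describe_prediction(["20 INR", "500 INR"], [0.1, 0.9])` returns `"This looks like a 500 INR note."`, which is the string that would be handed to Text-to-Speech.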

Screenshots (left to right): taking a picture, prediction for a blurred 500INR Note, prediction for a 20INR Note.

SOS

One of the most important features of the application is the SOS feature. In order to use the feature, a user just needs to enter 3 phone numbers in the app. To trigger the feature immediately without much hassle, a user just needs to vigorously shake the phone for 3-4 seconds, and an SOS message with the exact location details of the user is sent to the mobile numbers the user has entered.

With the aid of Telephony we were able to set up the feature without relying on an external API like Twilio.
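The shake trigger can be illustrated with a small sketch of the detection logic. This is not the app's implementation: the function name `detect_sustained_shake`, the acceleration threshold of 15 m/s², the 3 second duration and the 0.5 second allowed gap are assumed values chosen for the example.

```python
import math


def detect_sustained_shake(samples, threshold=15.0, min_duration=3.0, max_gap=0.5):
    """Return the timestamp at which a vigorous shake has lasted long enough.

    `samples` is an iterable of (timestamp_seconds, x, y, z) accelerometer
    readings in m/s^2, in time order. Returns None if no sustained shake is
    found. All default values are illustrative assumptions.
    """
    shake_start = None   # when the current run of strong readings began
    last_strong = None   # timestamp of the most recent strong reading
    for t, x, y, z in samples:
        magnitude = math.sqrt(x * x + y * y + z * z)
        if magnitude >= threshold:
            # Start a new run if there was none, or if the pause was too long.
            if shake_start is None or t - last_strong > max_gap:
                shake_start = t
            last_strong = t
            if t - shake_start >= min_duration:
                return t
    return None
```

A phone lying still reads roughly 9.8 m/s² from gravity alone, so the threshold sits above that. Requiring the motion to continue for a few seconds reduces accidental triggers from a single bump.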

What did we do Differently?

Perhaps the best thing that we learnt as MLH Fellows is how to strictly enforce Git Principles, along with the need for proper Documentation and Feature Builds. To make our Project stand out, we used some Developer Methodologies like:

- Using proper Git Commit Messages to document Feature Addition, Bug Fixes and Documentation Updates.

- Making use of a Project Board provided by Github to manage the Feature Development.

- Using Issues and Pull Requests effectively to manage Project Development.

- Adding proper Documentation for the whole Project, including a Wiki (if possible).

- Making a great pitch during the Project Demonstration and letting the Project tell a Story.

From a Development Perspective, we experimented with a lot of External APIs and Plugins to find what fits best for our case. We also dedicated some time to actually planning the Product before building it, so that we did not fill our Project Idea with a lot of features that would become unmanageable over time.

Possibly the cornerstone of our Application was its UI/UX, which was the brilliant work of Shambhavi. We used the minimum number of buttons, and the buttons present were large. For instance, the top half of the screen is one button and the bottom half is another, so that a user does not need to precisely click on a particular position.

Every feature, from image labelling to currency detection, uses text-to-speech to speak out to the user whatever is detected. Each screen vibrates with a different intensity on being opened, helping the user navigate. The buttons also have unique vibrations for better accessibility.

Results

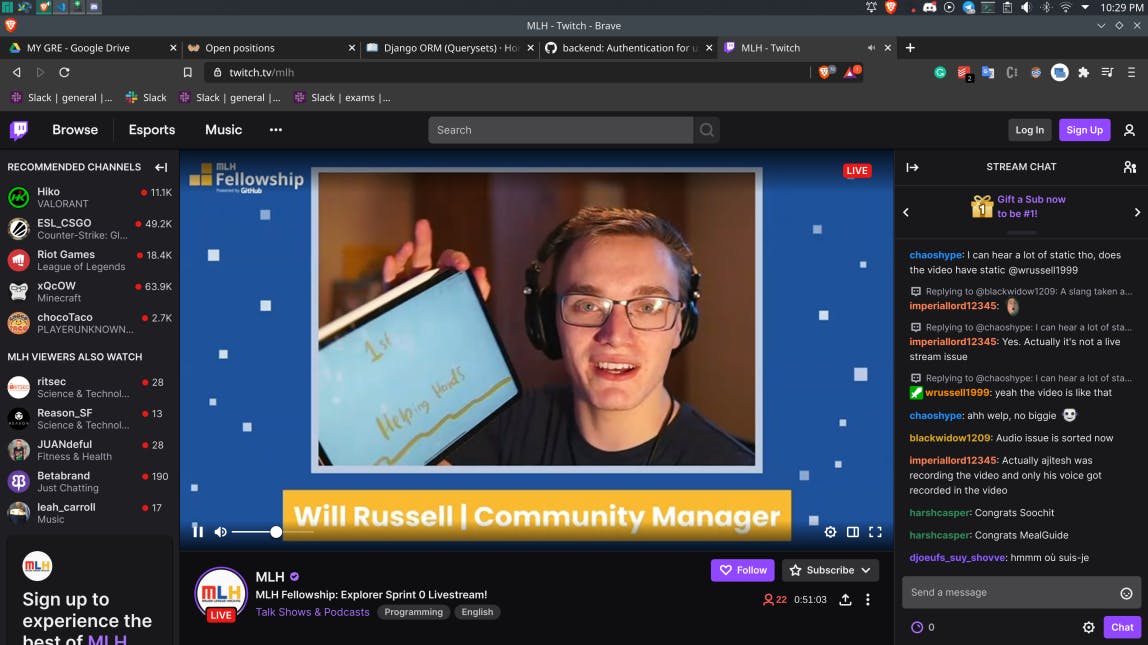

After the final pitches, we patiently waited for the results to come in on MLH's Live-Stream on Twitch, conducted by Will Russell, the Community Manager at MLH Fellowship, who also helped us debug some of the issues we faced in Flutter.

Check out our Video Pitch

The best part about the Live-Stream was checking out some of the other projects built by our fellow Pod-Mates. We had MealGuide, which was a MERN Application for managing Diet and acting as a pocket nutritional guide for Students. Soochit was another Application, built around the same Tech Stack that we used (Flutter), that automated Prescription Tracking and reminded users about the Medicines they have to take at regular intervals.

The one Project that simply took us all by surprise was SimpleRx, which was built around React, Django and MongoDB and aimed to digitize Prescriptions and create a Centralized Health Record Management System. All of the Teams made their submissions on Devpost and we patiently kept a look-out for the results.

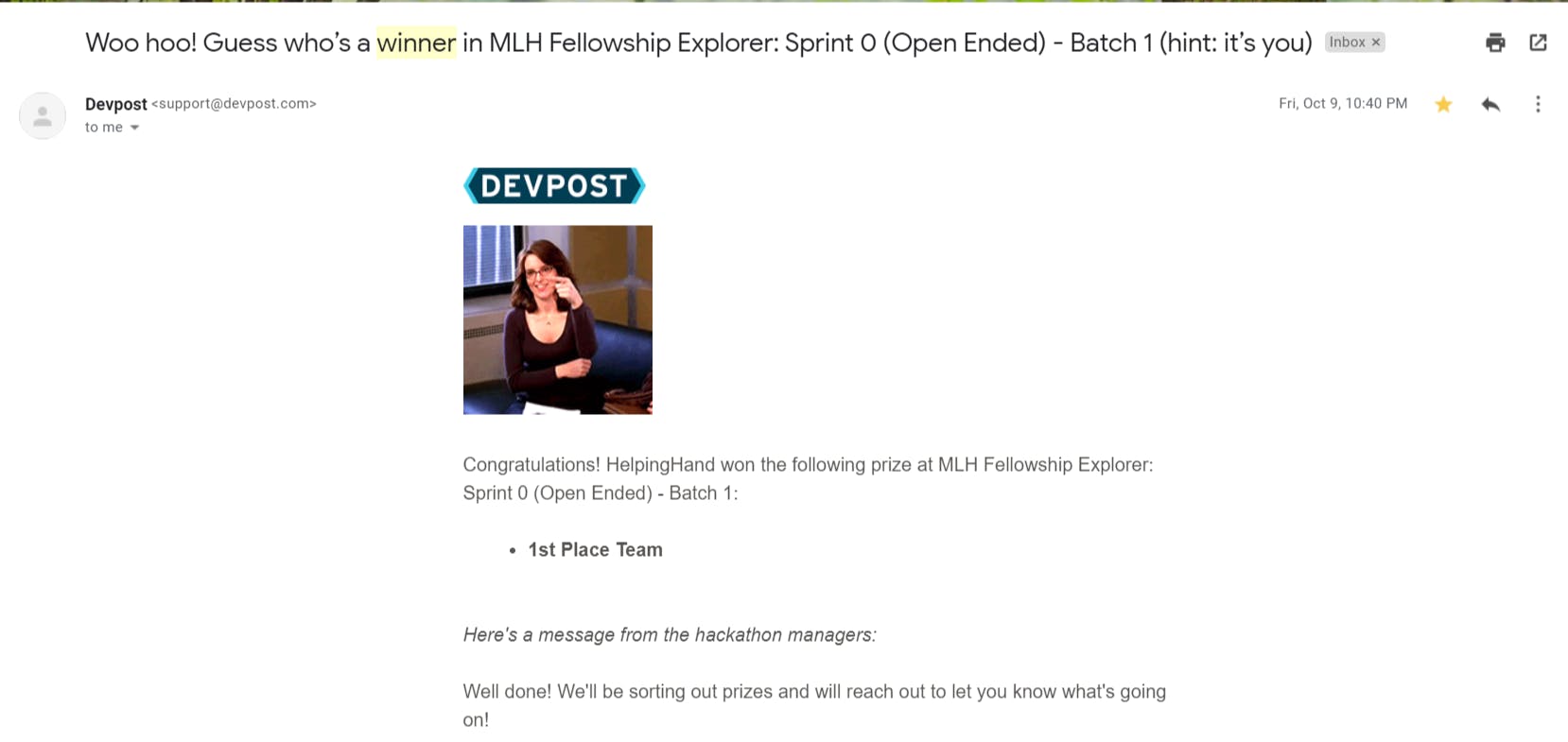

And Yay! We won the First Prize in the Sprint-0 Open-Ended Hackathon! This was a rare moment for me, since it was my first Virtual Hackathon where we actually won with serious participation.

Future Perspective

So, what's the future of Helping Hands?

Currently, the Project is available for Open-Source Contribution on our Github Repository and we are inviting Contributors who can help us in making the Application more User-Friendly and robust.

We still have a few things to iron out to go from a Hackathon MVP that can be demonstrated to a real-world Product MVP that people can actually make use of. But more than anything, we absolutely loved the Learning Experience and the Team Work we were a part of.

Check out our Project on Devpost and let us know what you like about the Project, along with any suggestions on how we can improve it.

Thanks to Yash and Shambhavi for helping me author this write-up.